AI Recognizes Emotions in Visual Art

Experts in artificial intelligence have gotten quite good at creating computers that can “see” the world around them, recognizing objects, animals, and activities within their purview. These have become the foundational technologies for the autonomous cars, planes, and security systems of the future.

But now a team of researchers is working to teach computers to recognize not just what objects are in an image, but how those images make people feel: in other words, algorithms with emotional intelligence.

“This ability will be key to making artificial intelligence not just more intelligent, but more human, so to speak,” says Panos Achlioptas, a doctoral candidate in computer science at Stanford University who worked with collaborators in France and Saudi Arabia.

To reach this goal, Achlioptas and his team collected a new dataset, called ArtEmis, which was recently published in an arXiv pre-print. The dataset is based on the 81,000 WikiArt paintings and consists of 440,000 written responses from over 6,500 people indicating how a painting makes them feel, including explanations of why they chose a certain emotion. Using those responses, Achlioptas and team, headed by Stanford engineering professor Leonidas Guibas, trained neural speakers (AI that responds in written words) that allow computers to generate emotional responses to visual art and justify those emotions in language.

The researchers chose to use art specifically, as an artist’s goal is to elicit emotion in the viewer. ArtEmis works regardless of the subject matter, from still life to human portraits to abstraction.

The work is a new approach in computer vision, notes Guibas, a faculty member of the AI lab and the Stanford Institute for Human-Centered Artificial Intelligence. “Classical computer vision capturing work has been about literal content,” Guibas says. “There are three dogs in the image, or someone is drinking coffee from a cup. Instead, we needed descriptions that defined emotional content.”

Capturing Emotion

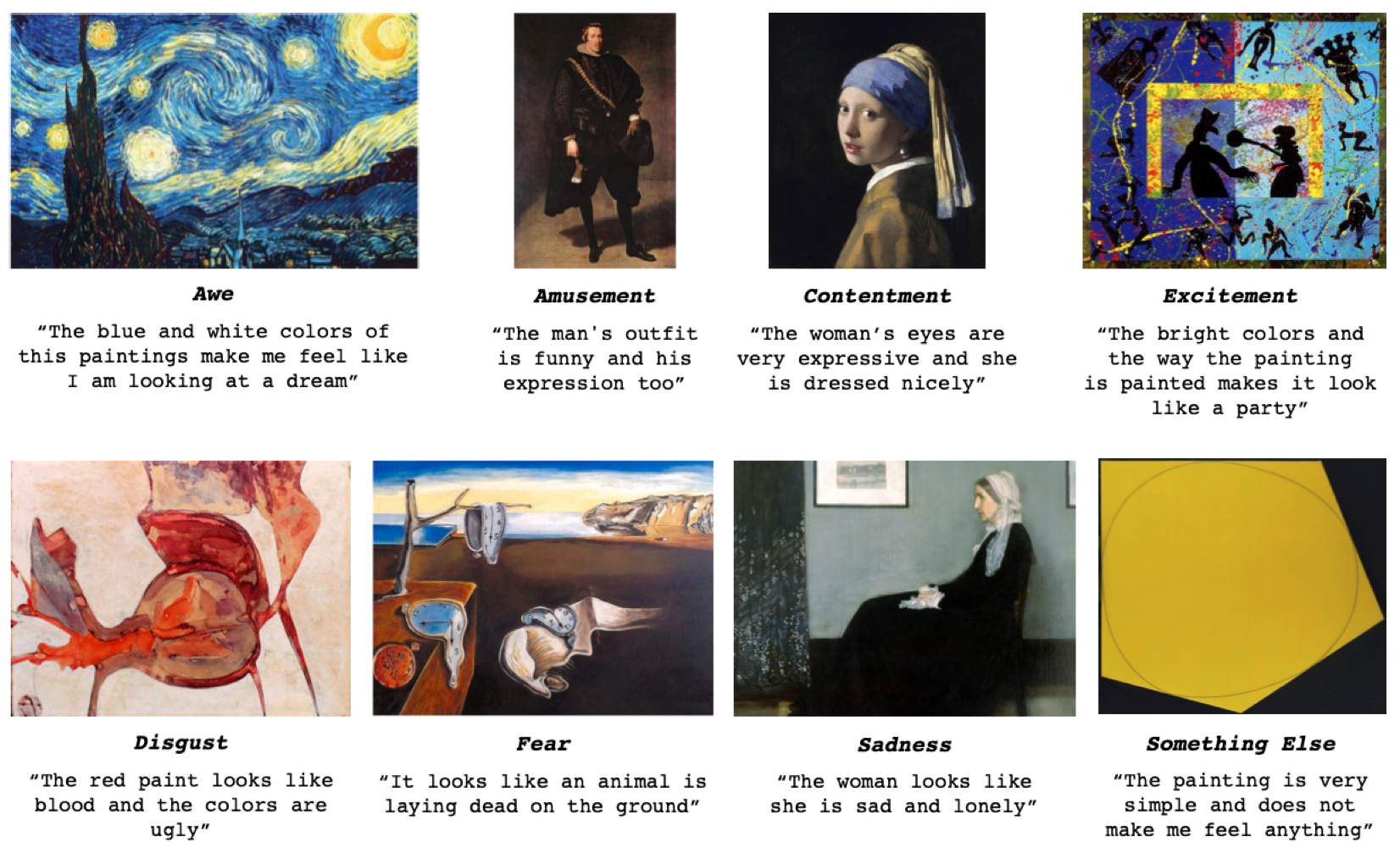

The algorithm categorizes the artist’s work into one of eight emotional categories, ranging from awe to amusement to fear to sadness, and then explains in written text what it is in the image that justifies the emotional read. (See examples below. All are paintings evaluated by the algorithm, but which were not used in the training exercises.)

“The computer is doing this,” says Achlioptas. “We can show it a new image it has never seen, and it will tell us how a human might feel.”

Remarkably, the researchers say, the captions accurately reflect the abstract content of the image in ways that go well beyond the capabilities of existing computer vision algorithms derived from documentary photographic datasets, such as COCO.

What’s more, the algorithm does not simply capture the broad emotional experience of a complete image; it can also decipher differing emotions within a given painting. For instance, in the famous Rembrandt painting (above) of the beheading of John the Baptist, ArtEmis distinguishes not only the pain on John the Baptist’s severed head, but also the “contentment” on the face of Salome, the woman to whom the head is presented.

Achlioptas points out that, even though ArtEmis is sophisticated enough to gauge that an artist’s intent can differ within the context of a single image, the tool also accounts for the subjectivity and variability of human response.

“Not every person sees and feels the same thing seeing a work of art,” he adds. For instance, “I can feel happy upon seeing the Mona Lisa, but Professor Guibas might feel sad. ArtEmis can distinguish these differences.”
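
One way to picture this variability is to treat viewers' responses to a painting as a distribution over emotion labels rather than a single answer. The short Python sketch below is illustrative only and is not the researchers' code: the function names `emotion_distribution` and `dominant_emotion` and the sample responses are hypothetical, and the labels are limited to emotions named in this article.

```python
from collections import Counter


def emotion_distribution(labels):
    """Convert per-viewer emotion labels into a probability distribution."""
    if not labels:
        raise ValueError("need at least one label")
    counts = Counter(labels)
    total = len(labels)
    return {emotion: n / total for emotion, n in counts.items()}


def dominant_emotion(labels):
    """Return the most frequent label; ties go to the label seen first."""
    return Counter(labels).most_common(1)[0][0]


# Hypothetical responses from five viewers of the same painting.
responses = ["contentment", "sadness", "contentment", "awe", "sadness"]

dist = emotion_distribution(responses)
top = dominant_emotion(responses)

for emotion, share in dist.items():
    print(f"{emotion}: {share:.0%}")
print("dominant:", top)
```

Keeping the full distribution, instead of only the top label, preserves the disagreement between viewers that Achlioptas describes.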

An Artist’s Tool

In the near term, the researchers anticipate ArtEmis could become a tool for artists to evaluate their works during creation to ensure their work is having the desired impact.

“It could provide guidance and inspiration to ‘steer’ the artist’s work as desired,” Achlioptas says. A graphic designer working on a new logo might use ArtEmis to ensure it is having the intended emotional effect, for example.

Down the road, after additional research and refinements, Achlioptas can foresee emotion-based algorithms helping bring emotional awareness to artificial intelligence applications such as chatbots and conversational AI agents.

“I see ArtEmis bringing insights from human psychology to artificial intelligence,” Achlioptas says. “I want to make AI more personal and to improve the human experience with it.”

Stanford HAI’s mission is to advance AI research, education, policy and practice to improve the human condition.